Security Operations Centers (SOCs) are engaging places to work, and they can deliver great organizational value. The problems they face, both internal and external, are interesting and fun to solve.

What is a Security Operations Center?

I consider MITRE’s 11 Strategies of a World-Class Cybersecurity Operations Center to be a seminal, authoritative work on what a SOC is, what its core functions are, and, as the name suggests, the strategies to employ to be an effective SOC. Since 2022, MITRE has defined a SOC thusly:

A SOC is a team, primarily composed of cybersecurity specialists, organized to prevent, detect, analyze, respond to, and report on cybersecurity incidents.

In 2014, under the original release (10 Strategies, instead of 11), a SOC was defined as a team that engages in CND (computer network defense) as described in Committee on National Security Systems Instruction (CNSSI) No. 4009. This definition is roughly the same, though less friendly to read:

The practice of defense against unauthorized activity within computer networks, including monitoring, detection, analysis (such as trend and pattern analysis), and response and restoration activities

Core SOC Functions

For larger or wealthier organizations (e.g., the DoD, the finance industry) and security vendors, there is often more opportunity for distinct roles in a SOC to emerge and be showcased. The different functions that a generalized SOC fulfills — monitoring, threat hunting, detection engineering, cyber threat intelligence, and incident response — are often broken out into their own distinct roles.

Monitoring Analysts

When people think of a “SOC,” this is the role they have in mind. Someone with eyes-on-glass monitoring of the alerts that are generated by AV, EDR, NIDS, custom alerts from the detection engineering function, etc. In a tiered SOC, someone in this role is often called a “level 1” analyst. These are often 24/7 shift-based roles. Investigations may rarely go “deep,” other than identifying whether an alert is a false positive, a benign positive, or a rare true positive. For a true positive, initial “triage” data might be collected (e.g., network logs and a KAPE collection) before being forwarded on to an Incident Response function.

It is, ultimately, the most important function of a SOC. If you see a job posting for a “SOC Analyst,” there’s a very high likelihood that this is what that role is. Even if an organization has no capacity for detection engineering, threat hunting, threat intelligence, or even incident response, it’s still critically necessary for someone to be listening to the alerts that fire from the expensive security tooling deployed throughout the network.

Picture related.

Detection Engineers

Detection engineers work with every other SOC function to create detection rules to generate alerts with as much value, fidelity, and context as possible. It is very important for detection engineers to have a good working relationship with the analysts who work with the alerts spawned by the detection rules they have created. One of the greatest challenges SOC analysts have historically faced is alert fatigue. When alerts are too frequent and have too high a false/benign positive rate, the misery index for individual SOC analysts dramatically increases and the quality and thoroughness of investigations decreases (this is an unavoidable consequence).
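
One way to make alert fatigue visible is to measure, per detection rule, how often analysts close its alerts as false or benign positives. The following is a minimal sketch, not a reference implementation: the rule names, the disposition labels, and the 0.6 threshold are all assumptions made up for illustration, and a real SOC would pull this data from its case management system or SIEM.

```python
from collections import Counter, defaultdict

# Hypothetical closed-alert records: (rule name, analyst disposition).
# The three dispositions mirror the outcomes a monitoring analyst assigns.
CLOSED_ALERTS = [
    ("Suspicious PowerShell download", "true_positive"),
    ("Suspicious PowerShell download", "benign_positive"),
    ("Rundll32 from user profile", "false_positive"),
    ("Rundll32 from user profile", "false_positive"),
    ("Rundll32 from user profile", "benign_positive"),
    ("Rundll32 from user profile", "true_positive"),
]


def noise_by_rule(alerts):
    """Return {rule: fraction of alerts closed as false or benign positive}."""
    dispositions = defaultdict(Counter)
    for rule, disposition in alerts:
        dispositions[rule][disposition] += 1

    report = {}
    for rule, counts in dispositions.items():
        total = sum(counts.values())
        noisy = counts["false_positive"] + counts["benign_positive"]
        report[rule] = noisy / total
    return report


def rules_to_tune(alerts, max_noise=0.6):
    """List rules whose noise ratio exceeds the threshold, noisiest first.

    The 0.6 default is an arbitrary illustration, not a recommendation.
    """
    report = noise_by_rule(alerts)
    flagged = [rule for rule, noise in report.items() if noise > max_noise]
    return sorted(flagged, key=lambda rule: report[rule], reverse=True)


if __name__ == "__main__":
    for rule in rules_to_tune(CLOSED_ALERTS):
        print(rule)
```

A report like this gives detection engineers and analysts a shared, concrete starting point for the tuning conversation instead of a vague sense that "the alerts are noisy."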

Detection engineers may also interface with threat intelligence functions to create detections based on new/emerging threat behaviors, tying specific activity to specific threat groups, or common trends and behaviors. Often, the intelligence received relays what generally happens in a particular attack, but not how that attack presents itself in available telemetry. In these cases, detection engineers may recreate attacks in a detection lab environment with verbose logging enabled. They can do this themselves or in collaboration with an adversary emulation or purple/red team function.

Incident response, too, benefits from interaction with detection engineering functions. Detection engineers, by the nature of their job, are very good at understanding and leveraging tools used for detections. They are generally subject matter experts on data sources and detection languages such as SIEM-specific query languages, Sigma, and YARA. If an incident responder identifies something (e.g., a process chain that indicates a system compromise), quick consultation with a detection engineer is a great way to 1. set up monitoring for all future instances of that behavior and 2. hunt for indicators of that behavior in existing evidence sources.
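
To illustrate the two outcomes described above, here is a rough sketch in which a single process-chain predicate is reused both to hunt through existing evidence and to monitor new events. Everything specific here is an assumption for illustration: the event field names (`parent_image`, `image`), the choice of "Office application spawns a script interpreter" as the chain, and the `alert` callback. In practice this logic would live in a SIEM query or a Sigma rule rather than in Python.

```python
import ntpath

# Hypothetical chain: an Office application spawning a script interpreter.
SUSPICIOUS_PARENTS = {"winword.exe", "excel.exe", "outlook.exe"}
SUSPICIOUS_CHILDREN = {"powershell.exe", "cmd.exe", "wscript.exe", "mshta.exe"}


def _image_name(path):
    """Lower-cased file name of a Windows path (ntpath works on any OS)."""
    return ntpath.basename(path).lower()


def matches_chain(event):
    """True if the event's parent/child pair matches the suspicious chain."""
    return (
        _image_name(event["parent_image"]) in SUSPICIOUS_PARENTS
        and _image_name(event["image"]) in SUSPICIOUS_CHILDREN
    )


def hunt(historical_events):
    """Retroactive use: return every stored event that matches the chain."""
    return [event for event in historical_events if matches_chain(event)]


def monitor(event_stream, alert):
    """Forward-looking use: call alert(event) for each new matching event."""
    for event in event_stream:
        if matches_chain(event):
            alert(event)
```

Keeping the match logic in one place means the hunt and the long-term detection cannot drift apart, which is most of the value of having the responder and the detection engineer talk to each other.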

Detection engineers and threat hunters are two sides of the same coin, and if they’re not the same persons/teams, they should absolutely be talking to one another. Google famously pioneered a “hunt once” policy, wherein any meaningful threat hunt would be converted into a long-term detection rule such that the hunt would never have to be repeated. I don’t think that is always possible (or a good idea), but I am a big fan of eliminating redundant work.

Threat Hunters

Threat hunters hunt… for threats. Threat hunting is a loaded and contentious term, but I defer to the same definition as Chris Sanders:

Threat hunting is the human-centric process of proactively searching through networks for evidence of attacks that evade existing security monitoring tools.

Chris also divides threat hunting into two categories: attack-based hunting (ABH) and data-based hunting (DBH). ABH is spawned from a hypothesis (e.g., a malicious backdoor scheduled task has been created on our network) and involves looking for evidence that either supports or refutes that hypothesis (e.g., enumerating scheduled tasks across the environment to identify anomalies). DBH is spawned from analysis of data (e.g., DNS request logs) to identify any potentially suspicious anomalies (e.g., DNS requests for domains with gibberish/random names). To learn more (and get your hands dirty with real, hands-on threat hunting labs), I recommend checking out Chris’s Practical Threat Hunting Course.
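
As a small illustration of the data-based example above, one common heuristic for surfacing "gibberish" domain names is the Shannon entropy of their characters. This is only a sketch under stated assumptions: scoring the longest label of each name is a naive simplification, the `min_entropy` and `min_length` thresholds are arbitrary values I chose for the example, and legitimate names (CDN hostnames, for instance) can score high too. Treat the output as candidates for a human to review, not as verdicts.

```python
import math
from collections import Counter


def shannon_entropy(text):
    """Bits of entropy per character, based on character frequency in text."""
    if not text:
        return 0.0
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def longest_label(domain):
    """Return the longest dot-separated label of a domain name (naive)."""
    labels = domain.lower().rstrip(".").split(".")
    return max(labels, key=len)


def flag_gibberish(domains, min_entropy=3.5, min_length=12):
    """Return domains whose longest label is both long and high-entropy.

    Both thresholds are illustrative assumptions, not tuned values.
    """
    flagged = []
    for domain in domains:
        label = longest_label(domain)
        if len(label) >= min_length and shannon_entropy(label) >= min_entropy:
            flagged.append(domain)
    return flagged
```

For example, calling `flag_gibberish` on a list of queried names pulled from DNS logs returns the subset worth a closer look, which an analyst can then compare against what is normal for the environment.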

Assuming you have a SIEM, most threat hunting looks like detection engineering for a SIEM. A SIEM is going to be the place that stores the most security-relevant telemetry most likely to contain evidence of potentially suspicious activity. A high-fidelity threat hunt in a SIEM can be converted into a long-term continuous detection opportunity (à la Google, as mentioned earlier).

Regardless of where threat hunting is happening (e.g., SIEM logs or forensic artifacts gathered at scale using a tool like Velociraptor), it is, ultimately, an exercise in baselining. The first step in hunting for a malicious scheduled task? Ruling out the 99.99% of scheduled tasks that are known/likely benign. Effectively filtering out noise while reducing potential blind spots is a very delicate art in both detection engineering and threat hunting. My general rule on tuning is to be as precise as possible. Instead of, for example, tuning out rundll32.exe because it occupies 99% of the ProcessName field in your logs, be more precise and filter out only the invocations of rundll32.exe that target files in C:\Windows\System32. After all, an invocation of rundll32.exe targeting a known signed Microsoft DLL file in C:\Windows\System32 is very different from one targeting a file in C:\Users\Bob\AppData\Local\temp.
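
The rundll32.exe example can be sketched as a filter. This is a simplified illustration with assumptions worth calling out: the event fields `process_name` and `target_dll` are hypothetical (real telemetry usually gives you a raw command line that has to be parsed first), and the filter checks only the DLL's location. It does not verify that the file is actually a signed Microsoft DLL, which a real exclusion should also consider.

```python
import ntpath

# Only DLLs under this directory are tuned out (compared case-insensitively).
TRUSTED_DLL_DIR = "c:\\windows\\system32\\"


def is_tuned_out(event):
    """True only for rundll32.exe invocations that target a System32 DLL.

    `process_name` and `target_dll` are assumed, pre-parsed event fields.
    """
    if event["process_name"].lower() != "rundll32.exe":
        return False
    # normpath collapses ".." segments so a traversal path cannot sneak
    # past the prefix check below.
    target = ntpath.normpath(event["target_dll"]).lower()
    return target.startswith(TRUSTED_DLL_DIR)


def remaining_after_tuning(events):
    """Return the events an analyst still needs to look at."""
    return [event for event in events if not is_tuned_out(event)]
```

The broad alternative, dropping every event where the process name is rundll32.exe, would also discard the invocation pointing at Bob's temp directory, which is exactly the blind spot precise tuning is meant to avoid.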

Incident Response Analysts

People like to think of Incident Response analysts as similar to a SWAT team. When a user has clicked on a bad link, the web proxy permitted the traffic, the EDR didn’t stop the payload from executing, and now the endpoint is doing things no endpoint ever should… It’s time to call in the big guns. Incident response analysts generally thrive on adrenaline and energy drinks. IR engagements can be short and sweet (e.g., box quarantined + credentials reset + no indicators of lateral movement) or long and painful (e.g., warding off ongoing exploitation of a zero-day vulnerability in an application the organization has deemed business critical and thus impossible to take offline).

IR analysts are intimately familiar with not having enough time, data, or adequate tooling to solve impossible problems. They often find that the logs they need for a particular case are not available in a SIEM due to an undetected log outage, the bespoke nature of the incident, or the fact that the logs in question were just too expensive to keep. Instead, they often work with raw logs, traditional disk-based artifacts, memory captures, and other less-than-ideal pieces of evidence. The tools they use to process these range from the simple (grep and awk) to the sophisticated (Cellebrite, Magnet Forensics, Velociraptor).

Cyber Threat Intelligence Analysts

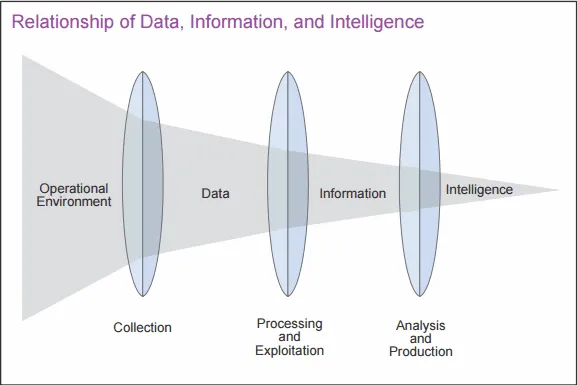

Cyber Threat Intelligence Analysts have a difficult problem. They need to collect and translate data into complete, accurate, relevant, and timely intelligence. The diagram below from Joint Intelligence: Joint Publication 2-0 summarizes this nicely:

Implementation of CTI varies widely across organizations. Broadly, CTI analysts endeavor to deliver useful intelligence to stakeholders to help them better make decisions. At a tactical level, this can be very useful to a SOC by way of feeding indicators of compromise (IOCs) to enable threat hunters and detection engineers. At an operational level, this can help those same stakeholders understand broader tactics and techniques being employed by threat actors, as well as what threat actors are most relevant to an organization. At a strategic level, the C-Suite can be informed on what the broad threats to an organization are, which can inform future strategic decisions in an organization’s structure and architecture.
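
At the tactical level, the simplest form of this is matching indicators against existing telemetry. The sketch below assumes a hypothetical indicator feed and hypothetical event fields (`dest_ip`, `query`); the IP address comes from a range reserved for documentation and the domain is a placeholder, so neither is a real indicator. A SIEM lookup table or threat intelligence platform would normally do this work.

```python
# Hypothetical indicator feed, keyed by indicator type.
IOCS = {
    "ip": {"203.0.113.50"},
    "domain": {"bad.example.net"},
}


def match_iocs(events, iocs):
    """Return (event, indicator_type) pairs for events that hit an indicator.

    Events are dicts that may carry a `dest_ip` (network logs) or a
    `query` (DNS logs); both field names are assumptions for this sketch.
    """
    hits = []
    for event in events:
        # Normalise DNS names: lower-case, no trailing root dot.
        query = (event.get("query") or "").lower().rstrip(".")
        if event.get("dest_ip") in iocs["ip"]:
            hits.append((event, "ip"))
        elif query in iocs["domain"]:
            hits.append((event, "domain"))
    return hits
```

Indicator matching like this is cheap to run, which is why simple indicators make a reasonable first step for a SOC that has no threat intelligence workflow yet.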

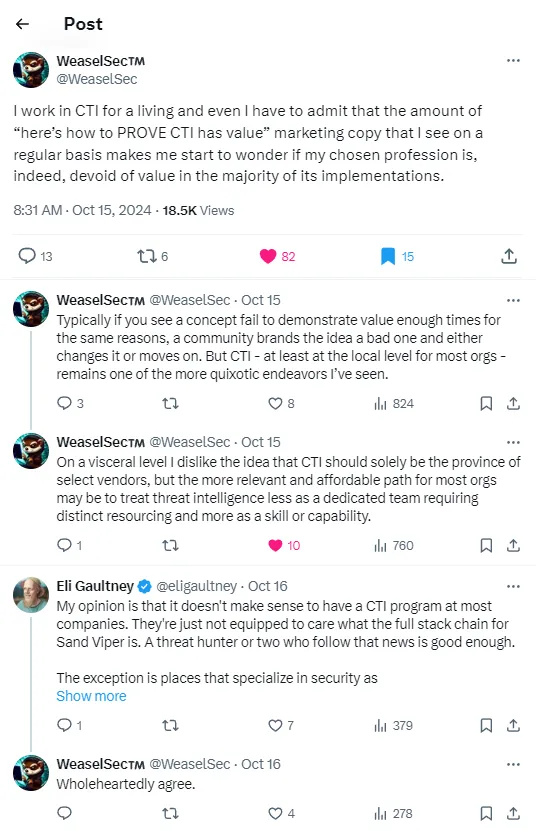

CTI, as a concept, is often very unsuccessful. I personally believe this is simply because humans are bad at intelligence. I think there are too many intermediary steps with judgment calls between data ingestion and potential action to ensure a consistent high-quality outcome. I have seen many CTI program attempts flail about in failure and despair. I think a lot of this failure stems from the baggage that the word “intelligence” has accumulated over the century of its relevance in the United States. A lot of folks who view themselves as working in capital “I” Intelligence have extremely strong opinions on what intelligence is, what it is not, how to do it, and how not to do it. They’ve read Psychology of Intelligence Analysis by Richards Heuer. They’ve familiarized themselves with decades of intelligence operations in Western military operations. I think there is a tendency for CTI analysts to become very divorced from what provides organizational value in pursuit of doing Real Intelligence Work (TM).

WeaselSec, who works in CTI, has an X thread with which I strongly resonate:

Still, CTI can be an awesome and valuable role to work in. I think any SOC that is not leveraging some form of threat intelligence (start with simple indicators!) is doing it wrong. There’s a reason that Strategy 6 of 11 Strategies of a World Class SOC is “Illuminate Adversaries with Cyber Threat Intelligence.”

Other SOC Roles

The previously discussed roles are just the ones that I’ve seen most often. But, seemingly, for every SOC that exists, there is also another permutation of a SOC with different niche roles. Other roles I’ve also seen include:

- “Tool Maker” - Some SOCs are fortunate enough to have people whose entire purpose is to write tools/scripts for other members of the SOC. This may include, for example, PowerShell collection scripts used in incident response triage.

- Vulnerability Management Analyst - Many SOCs have vulnerability management as a function within them. This is one of the most important security roles in an organization.

- Automation Engineer - If a SOC is using a Security Orchestration, Automation, and Response (SOAR) platform, it probably has engineers whose purpose is to maintain and build out functionality within the SOAR. If your organization leverages SOAR but is not willing to invest in engineers dedicated to maintaining/building it out, good luck.

- Red/Purple Team Functions - Most folks I speak with indicate that any/all red team members are very separate from their SOC functions. However, this is not universally true, and having in-team relationships between red and blue can result in great outcomes when done right (e.g., a positive, collaborative, non-competitive relationship exists).

- System Administrator - For SMBs, the SOC team typically serves as both the system/network administrators and the SOC. It is not uncommon, for example, for a SOC to manage firewalls, IDS, AV, etc. themselves.

Security Operations Centers Versus Incident Response Teams

I sometimes get into debates with industry peers on what a SOC is and if it is something that is different from another popular industry term: a Cyber Security Incident Response Team. While I defer to MITRE’s definitions of a SOC as authoritative (which suggest that they are the same thing), I do believe a SOC and an Incident Response (IR) function can be two different things. Where most of a SOC’s functionality is focused on the activities that occur before an incident (detecting, analyzing, monitoring), the response steps that occur after an incident is declared are entirely their own beast.

I think that a clear example of the distinction between these two functions can be illustrated in the differences between SANS.edu’s graduate certificate programs for “Cyber Defense Operations” and “Incident Response.” The required core courses for Cyber Defense Operations are focused on threat detection and monitoring (SEC511) and defensible security architecture (SEC530), whereas the required core courses for Incident Response cover Windows artifacts and traditional digital forensic tools (FOR500), incident response-specific forensics and tooling (FOR508), and network forensics (FOR572).

What languages does a SOC speak? A SOC speaks SIEM. A SOC speaks NIDS. A SOC speaks time-to-detect. A SOC speaks to a SOC lead. What languages does an IR team speak? IR speaks Windows artifacts. IR speaks raw log files. IR speaks awk, sed, and grep. IR speaks to the C-Suite.

However, for most organizations, an IR team and a SOC are the same thing. If an SMB (most organizations) is lucky enough to have a SOC/IR function, it is rarely more than six people. And it is even rarer for that function to exclusively perform IR/SOC work. Generally, they’re also network/system administrators for things like firewalls or web proxies, HR/Compliance violation investigators (e.g., was Bob doing something on his computer that he should not have been?), and get called in as the “big guns” whenever there is any variant of a confusing, widespread IT issue. In short, for most organizations, from cradle to grave, IR and SOC are one and the same.